New paper on uncertainty-aware RL, to appear in RA-L.

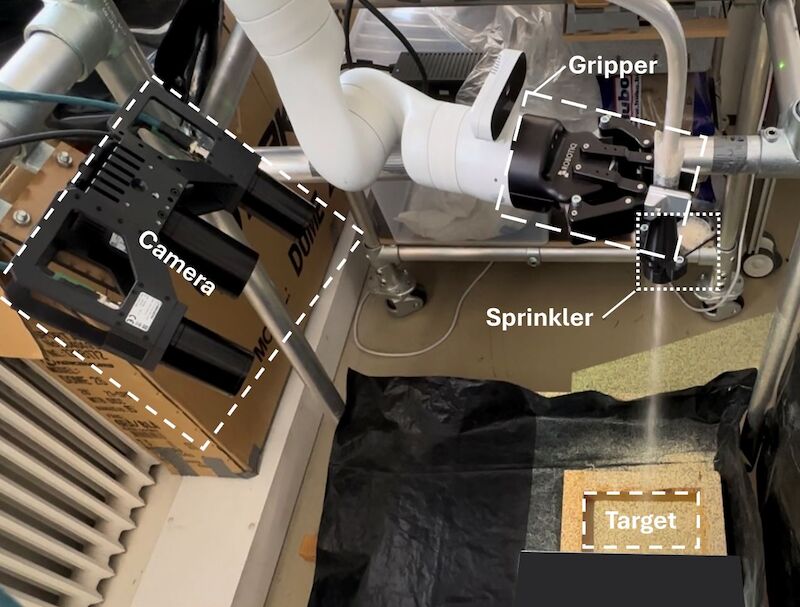

How can robots learn effectively in the real world when their vision is noisy, occluded, or incomplete? Our latest RA-L publication (Du et al., 2025) introduces AREPO, a reinforcement learning method that quantifies uncertainty and adapts to it, enabling robust decision-making in visually challenging environments!

✅ Denoised latent representations: We train a VAE on noisy image inputs to learn a denoised latent representation of the system, together with a clean and reliable reconstruction of its state.

✅ Uncertainty estimation via Monte Carlo sampling: We quantify epistemic uncertainty by propagating latent variance through the decoder, enabling more informed decision-making.

✅ Uncertainty-guided RL exploration: The estimated uncertainty dynamically modulates maximum entropy RL, prioritizing actions that reduce epistemic uncertainty while improving sample efficiency.

🔬 Results: AREPO achieves state-of-the-art generalization in a high-occlusion industrial task, significantly outperforming standard domain randomization, ensemble RL, and model-based planners in zero-shot sim-to-real transfer.
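
To make the second and third highlights more concrete, here is a minimal sketch of the general idea, not the AREPO implementation. It draws latent samples from a Gaussian posterior, pushes each one through a decoder, and uses the spread of the decoded outputs as an uncertainty score, which then scales the entropy temperature of a maximum entropy RL agent. Everything named here is an assumption made for illustration: the toy `decode` function stands in for a trained VAE decoder, and the linear, clipped rule in `entropy_temperature` (with `alpha_base`, `gain`, and `alpha_max`) is a hypothetical choice, since the post does not specify how the uncertainty modulates the entropy term.

```python
import math
import random


def decode(z, weights):
    """Hypothetical stand-in for a trained decoder: linear map followed by tanh."""
    return [math.tanh(sum(w * zi for w, zi in zip(row, z))) for row in weights]


def mc_decoder_uncertainty(mu, log_var, weights, n_samples=64, rng=None):
    """Monte Carlo estimate of decoder output spread.

    Samples z ~ N(mu, diag(exp(log_var))), decodes every sample, and returns
    the per-output mean plus the variance averaged over output dimensions.
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed so the sketch is reproducible
    std = [math.exp(0.5 * lv) for lv in log_var]
    outputs = []
    for _ in range(n_samples):
        z = [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, std)]
        outputs.append(decode(z, weights))
    n_out = len(outputs[0])
    mean = [sum(o[i] for o in outputs) / n_samples for i in range(n_out)]
    var = [
        sum((o[i] - mean[i]) ** 2 for o in outputs) / n_samples
        for i in range(n_out)
    ]
    return mean, sum(var) / n_out


def entropy_temperature(uncertainty, alpha_base=0.2, gain=5.0, alpha_max=1.0):
    """Assumed rule: temperature grows linearly with uncertainty, then is clipped."""
    return min(alpha_max, alpha_base * (1.0 + gain * uncertainty))


# Toy usage: the same latent mean with a narrow and a wide posterior.
weights = [[0.5, -0.3], [0.1, 0.8]]  # hypothetical decoder weights
mu = [0.2, -0.1]
_, u_low = mc_decoder_uncertainty(mu, [-6.0, -6.0], weights)
_, u_high = mc_decoder_uncertainty(mu, [0.0, 0.0], weights)
print("uncertainty:", u_low, u_high)
print("temperature:", entropy_temperature(u_low), entropy_temperature(u_high))
```

In this sketch, a wider latent posterior yields a larger decoded variance and therefore a higher entropy temperature, so the agent explores more when its perception is less certain. The clipping at `alpha_max` is only a guard to keep the exploration bonus bounded.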
